ViST is a complete Vision Transformer implemented entirely in C++ using only the standard library and stb_image. It classifies images into three categories using a from-scratch patching, attention, and classification pipeline — no frameworks, no external ML libs.

Clone and build with CMake:

git clone https://github.com/allanhanan/ViST.git

cd ViST

mkdir build

cd build

cmake ..

makeTo train the model:

./ViTTo test on a saved model and image:

./ViT /path/to/model_checkpoint.bin /path/to/image.png

ViST is a fully hand-built Vision Transformer implemented in C++ using only the standard library (plus stb_image for image I/O). It reconstructs the entire ViT image-classification pipeline from first principles: loading and preprocessing images, splitting into patches, adding positional encodings, applying Transformer blocks (self-attention + feedforward layers), and classifying with a linear head.

All matrix and vector operations (dot products, normalization, activations, etc.) are coded manually with std::vector loops,

and even a basic backpropagation and optimizer are implemented by hand. This pure-CPU, no-framework design is for me to test my own skills and to understand how a transformer works

Below is a breakdown of the architecture, file responsibilities, and implementation details.

stb_image. It’s resized to a fixed size (like 300×300) using nearest-neighbor and normalized to [0,1].16×16×3.The entire pipeline is load → patch → encode → transform → classify.

image_loader.hppHandles image loading and resizing using stb_image. Returns a normalized 3D vector (H×W×3). Uses nearest-neighbor interpolation. Includes:

load_image() – loads, normalizes, checks shaperesize_image() – pure CPU-based resizing (nearest)image_utils.hppHas augmentation functions like flips, rotations, brightness — but these aren’t used in training yet. Intended for expansion.

patch_embedding.cpp

Extracts 16×16 patches from an image. Each patch is flattened into a 1D float vector (RGB interleaved).

Stored in a vector<vector<float>>.

create_patch_embedding() loops through image and builds patch embeddings.positional_encoding.cppBuilds sinusoidal encodings per patch (same method as original Transformer). Adds these encodings element-wise to each patch vector. Scaling factor is 0.1. Functions include:

create_positional_encoding(num_patches, dim)add_positional_encoding(patches, encoding)utils.hppThis file is where the math lives. Matrix ops, layer norm, GELU, random init, softmax, loss, etc.

matmul, transpose, add_matrices, scalar_multiplylinear_transform = does XW + b with triple loopslayer_norm = standard zero-mean, unit-variance + gamma/betagelu = exact GELU (with tanh)random_vector, random_matrix – uniform weight initsoftmax_vector, calculate_loss, add_biasmulti_head_attention.hppDefines the multi-head self-attention operation. It computes Q, K, V projections, splits into heads, performs scaled dot-product, and projects the result back. The catch? No softmax. Scores are scaled and clamped instead.

multi_head_attention() – takes in input, weights, biases, number of heads, d_k, d_vlinear_transformThis mostly does what you’d expect from attention, minus the softmax. Logs Q/K/V sizes to help debug.

feedforward.hppImplements the 2-layer MLP inside each transformer block.

feedforward_network(input, W1, b1, W2, b2)transformer_block.cpp

Class wrapper for a single Transformer block. Instantiates attention weights, FFN weights, and layer norm params.

The forward() function applies everything in order:

It follows the standard Transformer sub-layer logic, with shape checks and logs.

vit_model.hppThis is where the full Vision Transformer model is defined. It combines together image loading, patching, encoding, transformer stack, and final classification.

vision_transformer(image_path, num_classes, num_blocks) – main entryload_image()create_patch_embedding()[CLS] tokenNote: this head is not trained. The second classifier in VitModelWrapper is trained instead.

training_utils.hppUtilities for training logic — loss, dropout, gradient update, early stopping.

cross_entropy_loss() – manual softmax + log lossbackpropagate_classifier() – computes gradient for classifier weightsupdate_classifier_weights() – basic gradient descent with optional clippingdropout() – not used yet but implementedEarlyStopping – struct that tracks loss and patiencegradients.hppLow-level backpropagation logic. This file prepares for full gradient descent through layers, but only the classifier backprop is wired up currently.

backprop_linear_transform() – computes dX, dW, db for a linear layergelu_derivative(), gelu_gradient() – for FFN backpropoptimizers.hppManual Adam optimizer for weights and biases.

adam_update_matrix(), adam_update_vector()checkpoint.hppHandles saving and loading model checkpoints in binary. This only covers the classifier layer and Adam optimizer state — transformer weights aren’t stored.

save_checkpoint() – writes classifier weights, bias, epoch, Adam m/v vectorsload_checkpoint() – restores them with dimension checksSimple binary format, used after every training epoch.

train.cppThe main training logic. Runs through all images in a folder, applies the model, computes loss, and updates the classifier via Adam. Batches are processed in parallel.

apple/, banana/, etc.)std::asyncbackpropagate() to compute gradient for classifierNote: Only the final classifier is trained. The actual Vision Transformer blocks are frozen random weights.

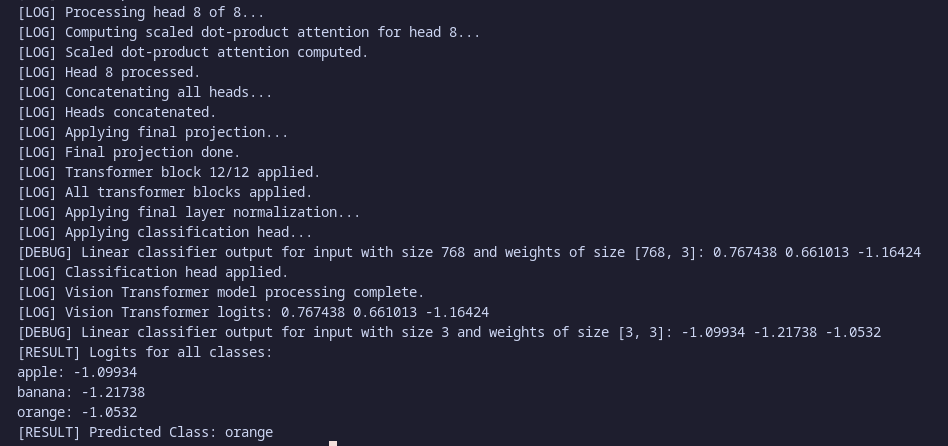

test.cppSimple inference code. Loads a model checkpoint and an image, runs a forward pass, and prints logits + predicted class.

test_model(checkpoint_file, image_path)main.cpp

CLI entry point. If no args: trains from ../train. If args are provided: loads checkpoint and runs test using 12 blocks (not consistent).

./ViT model.bin image.png → testTriggered by running ./ViT with no arguments. This launches the full training pipeline in plain C++:

../train directory for subfolders (apple, banana, orange)std::asyncprocess_batch() on its assigned samplesstd::mutexstb_image to decode into raw RGB datalinear_transform)Q · Kᵗ / √d_klayer_norm applied (zero mean/unit variance)layer_norm finishes the blocklogits = W_cls · [CLS] + b_clsW and bias b receive gradientsm, v) tracked per parameterm̂ = m / (1 - β₁ᵗ), v̂ = v / (1 - β₂ᵗ)param -= lr × m̂ / (√v̂ + ε)save_checkpoint() writes a binary file containing:m/v for weights and biasTriggered by running ./ViT model_checkpoint.bin image.png

load_checkpoint()

All matrix operations are explicit — no Eigen or BLAS. For example, matmul(A, B) is done with triple nested loops. It’s slow but makes the math very clear.

A few hacks help avoid numeric instability:

Some headers include their `.cpp` counterparts inline (like vit_model.hpp including transformer_block.cpp). Not typical C++ structure, but works for a bundled setup.

While ViST was built to push me to achive what’s possible with just the C++ standard library, there are still a few practical and architectural limitations (i.e skill issues) worth noting:

train.cpp assumes class folders are apple, banana, orangemain.cpp uses different transformer block counts for train vs. teststd::vector. There’s no GPU acceleration or SIMD usage, so larger models will be very slow.These aren’t dealbreakers, most are by design for simplicity. But worth keeping in mind if you plan to try this and contribute (would be great)

There’s a lot of stuff to add, improve, rework but theres some that’s more important to make it a somewhat polished code.

matmul, linear layers, GELU, etc.) to run on the GPU for massive speedups. The current nested-loops are just too slow on CPU.stb_image: Implement a custom PNG/JPEG decoder to remove the only external dependency and actually stick to “standard library only” if that’s still the goal anymore atp.apple and banana.This version of ViST was already a wild ride, but taking it one step further would turn it into a fully usable transformer or a minimal production-grade ViT prototype in C++.

ViST rebuilds a full Vision Transformer from scratch using just C++ and stb_image. It patches, encodes, transforms, and classifies images through custom layers — no frameworks, no ML libs, no GPU, and no braincells.

Everything is hand-written (i'll change the image loader, then this will be true). There’s a Transformer model with layer norm and attention, positional encodings, and even model checkpointing — all working, all clean(?)

The only thing that isn’t wired in is full end-to-end gradient descent — right now, just the final classifier gets trained. The rest stays frozen.

Still, this was a great way for me to learn how Transformers work even if it is full of holes and tons of tech debt for any future changes.

Comments